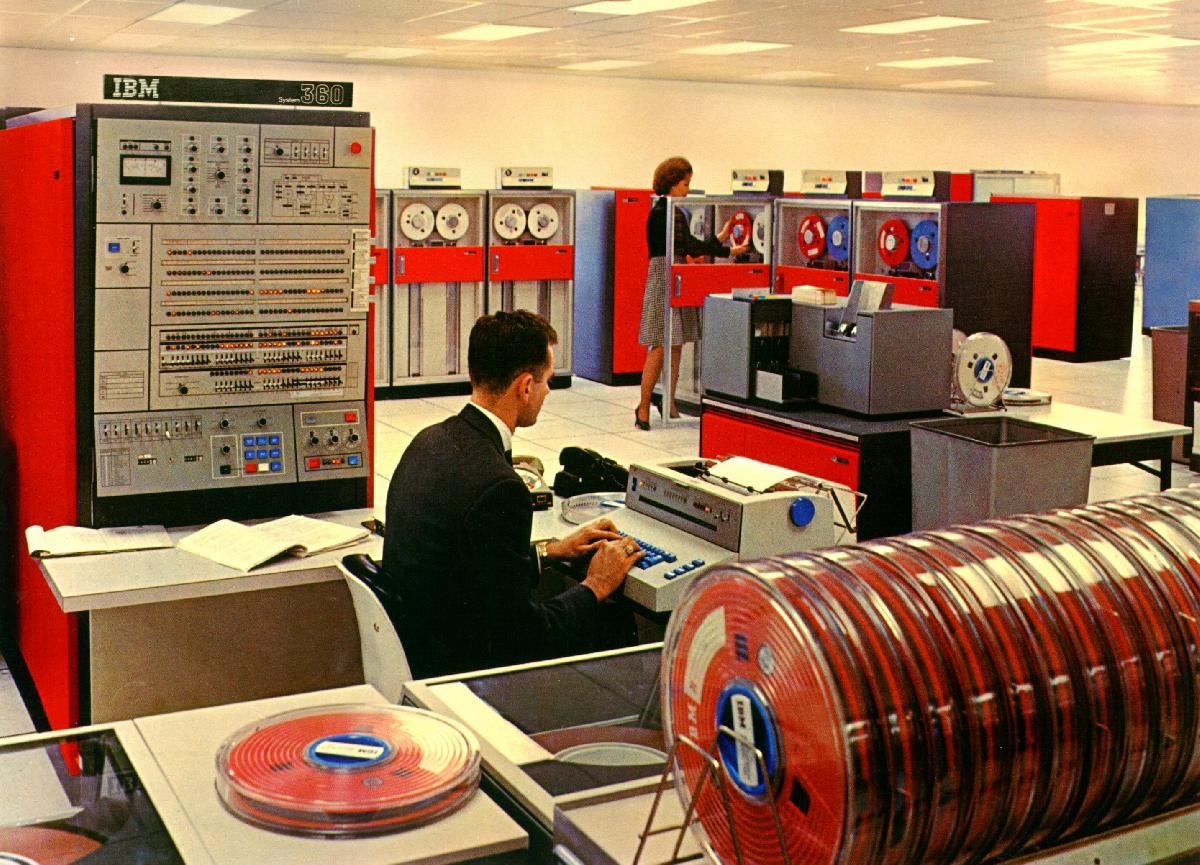

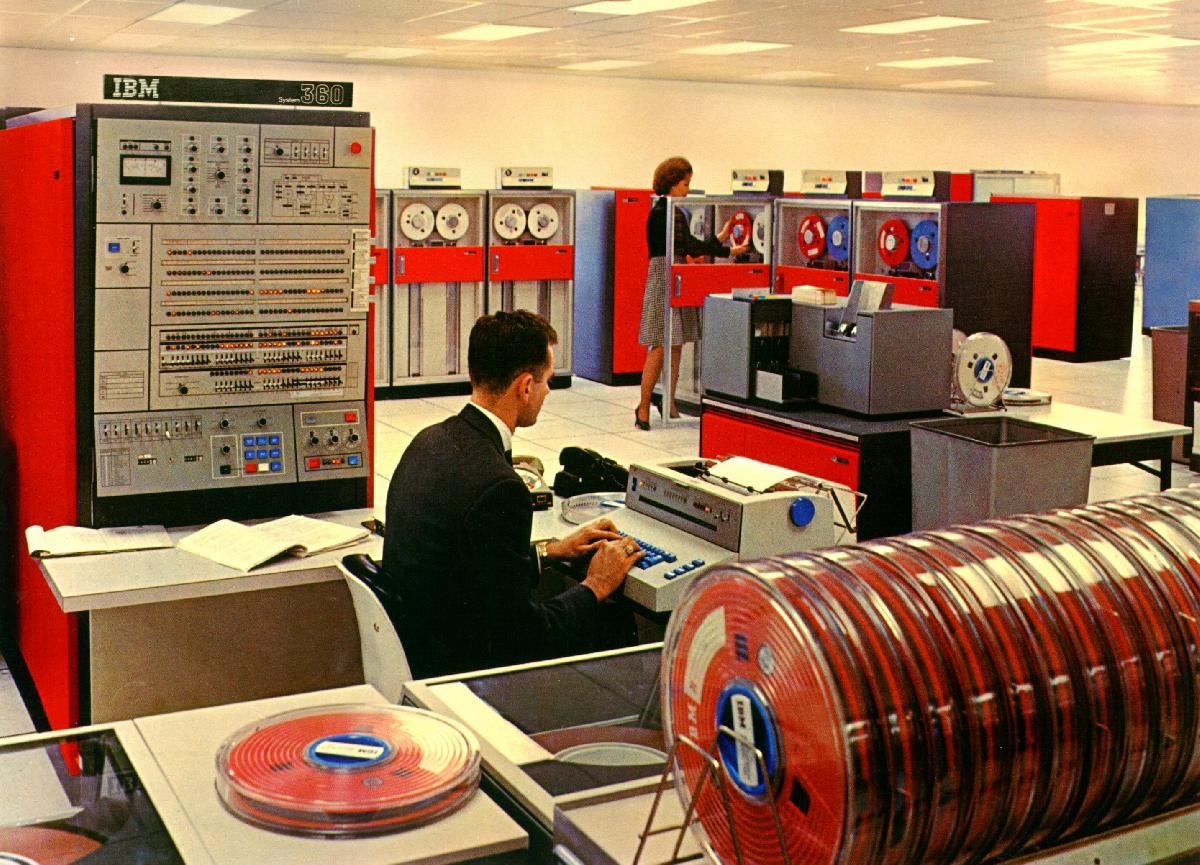

Remember the digital computer circa 1963? There were banks of flashing lights and spinning reels of magnetic tape. The machine could do anything given sufficient programming, and we looked forward to an age where almost anything could be done by a computer, efficient and infallible. There was a television show called "The Twenty-First Century" where Walter Cronkite extolled the virtues of our technological future, and the computer was a central theme.

There is a growing sensation that something has gone wrong. I find it sad that what was obvious in my circle of friends in 1982 is just becoming visible to the so-called experts in the field. Lawyer jokes are being replaced by programmer jokes. The recurring theme of computer conversation in the twenty-first century is how fallible computers are.

The first stage in solving a problem is recognizing that a problem exists. The computer does about as much for our society in 2003 as it did in 1973. We may use computers more than we did then, but Microsoft Word does not produce better documents faster or cheaper than an IBM Selectric typewriter, spreadsheets do not produce better accounting or data analysis than paper charts or punched cards, and PowerPoint presentations do not communicate better than hand-drawn viewgraph or slide presentations. If we count the benefits of e-mail and the World Wide Web and we discount the cost of unreliable software, then I feel it is safe to say that the digital computer does as much for us as it did thirty years ago, neither a lot more nor a lot less.

We certainly spend more on computers. I don't have actual employment records, but the computer community in the United States seems to have grown from about six thousand to six million. (This is the programming and service community; almost all of us are users in one form or another.) Since the computers themselves cost a lot less, let us settle for a factor of one hundred. That is, we spend 100 times (20 dB) as much on computers and computing now.

The computers are certainly better and cheaper. The hardware people are making giant strides, and every time we think we're getting close to the limits, somehow the technology engineers find a way to do more for less. I would argue that our computer hardware is one thousand times more cost effective in computing speed, memory, and disk storage space. That is, we get 1000 times (30 dB) more computing power for the same cost now.

Finally, we are solving the same problems now as then. We are computing, analyzing, and sorting data just as we did thirty years ago. I would argue that the computer pioneers, and those who paid them, would not have invested so much without a firm conviction that this was work that would endure. The continuity of the tasks we do with computers, and our ability to reuse the work done in the past, should make those problems one hundred times easier. That is, those who program have a job 100 times (20 dB) easier now.

Doing the full accounting of this analysis, we have lost a factor of about 10,000,000 (70 dB): we spend 20 dB more, on hardware that is 30 dB more cost effective, doing a job that should be 20 dB easier, and we get about the same result. To put things into perspective, that 70 dB factor is the difference in light intensity between the bright daylight sun and a crescent moon. When Arthur C. Clarke compared the reality of computing in 2001 to the computer HAL in his book 2001: A Space Odyssey, he pointed out how wonderfully small and powerful computers were but how disappointing computer programming had become.

A computer professional who loses 1 dB, about 21 percent, of his salary is upset. But the profession has lost 70 dB, 99.99999 percent, of its ability to deliver the goods, and people are just now complaining about it. I think it is safe to say that something is wrong.
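The decibel bookkeeping in this accounting can be checked mechanically. What follows is a minimal sketch, assuming Python as the illustration language and using helper names (`ratio_to_db`, `db_to_ratio`) invented for this example. It adds the three factors in decibels, converts the total back to a plain ratio, and shows what a single decibel means as a percentage: a 1 dB gain is about 26 percent more, while a 1 dB loss is about 21 percent less.

```python
import math


def ratio_to_db(ratio):
    """Express a power ratio in decibels."""
    return 10 * math.log10(ratio)


def db_to_ratio(db):
    """Convert decibels back to a plain ratio."""
    return 10 ** (db / 10)


# The three factors from the accounting above.
spending_db = ratio_to_db(100)    # we spend 100 times as much
hardware_db = ratio_to_db(1000)   # hardware is 1000 times more cost effective
reuse_db = ratio_to_db(100)       # the job should be 100 times easier

# Multiplying ratios is the same as adding decibels.
total_db = spending_db + hardware_db + reuse_db
total_ratio = db_to_ratio(total_db)
percent_lost = 100 * (1 - 1 / total_ratio)

# One decibel, for comparison with the salary example.
gain_1db_percent = 100 * (db_to_ratio(1) - 1)
loss_1db_percent = 100 * (1 - db_to_ratio(-1))

print(f"total: {total_db:.0f} dB, a factor of {total_ratio:,.0f}")
print(f"share lost: {percent_lost:.5f} percent")
print(f"1 dB up: +{gain_1db_percent:.1f} percent, "
      f"1 dB down: -{loss_1db_percent:.1f} percent")
```

Working in decibels turns the chain of multiplications into a sum, which is why the three estimates can simply be added.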

So we recognize the reality: computers are not fulfilling their promise, not delivering the goods, not working as planned.

In his Hitchhiker's Guide to the Galaxy books, Douglas Adams wrote about Frogstar, a once-thriving planet that had developed an obsession about shoes so they spent more and more of their economy on shoes. As people insisted on more and more footwear, the quality of the shoes declined and they wore out faster so yet more people turned to shoe production. Eventually, the entire economy of the planet was devoted to producing shoes and it still was not enough. The planet reached the shoe event horizon, its economy collapsed entirely, and it became a barren wasteland.

We are reaching the software event horizon. Somehow we have employed more and more people to write software of less and less quality to satisfy an insatiable market for computer programs. As these programs perform more poorly than their predecessors, we find ourselves buying upgrades more frequently, and the demand for programmers increases to compensate for their lower effective productivity.

Programming used to be a fine art, something reserved for a few specialized craftsmen. A programmer was expected to be fluent in the specific language of his computer and in the general language of mathematics. Conversion from base-ten to base-eight (octal), base-sixteen (hexadecimal), or base-two (binary) was a natural notion. The rarefied notions of what is today called "complexity theory" were normal concepts in algorithm design at the computer center.
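For readers who have not done these conversions by hand, the procedure is repeated division by the target base, collecting the remainders from last to first. This is a minimal sketch in Python; the function name `to_base` is made up for this illustration.

```python
DIGITS = "0123456789ABCDEF"


def to_base(number, base):
    """Write a non-negative integer in the given base (2 through 16)."""
    if not 2 <= base <= 16:
        raise ValueError("base must be between 2 and 16")
    if number < 0:
        raise ValueError("number must be non-negative")
    if number == 0:
        return "0"
    remainders = []
    while number > 0:
        # Each division peels off the next least-significant digit.
        number, remainder = divmod(number, base)
        remainders.append(DIGITS[remainder])
    # Remainders come out least-significant first, so reverse them.
    return "".join(reversed(remainders))


for base in (8, 16, 2):
    print(f"1963 in base {base}: {to_base(1963, base)}")
```

Because eight and sixteen are powers of two, each octal digit stands for exactly three binary digits and each hexadecimal digit for four, which is what made these bases natural at the console.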

Today we have a different programming community. It is considered reasonable to be a computer programmer without proficiency in mathematical or algorithmic reasoning. I find myself explaining to self-proclaimed programmers why a system with two yes/no inputs cannot discern and produce five different outcomes. I find myself explaining distinctions, getting resistance that "I already know that," finally getting the idea across, and still getting software that produces the wrong answer.
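The two-input limit is a counting argument: two yes/no inputs have only four possible settings, so no routine reading them can produce more than four distinct results. A minimal sketch in Python makes the point by enumeration; the outcome names and the function `five_way_attempt` are hypothetical, chosen only for illustration.

```python
from itertools import product

# Every possible setting of two yes/no inputs.
INPUTS = list(product([False, True], repeat=2))


def outcomes_reached(decide):
    """Collect the distinct outcomes a decision function can produce."""
    return {decide(a, b) for a, b in INPUTS}


def five_way_attempt(a, b):
    """A hypothetical routine that names five outcomes but sees only two bits."""
    if a and b:
        return "approve"
    if a:
        return "review"
    if b:
        return "defer"
    if not a and not b:
        return "reject"
    return "escalate"  # never reached: no fifth input combination exists


reached = outcomes_reached(five_way_attempt)
print(len(INPUTS), "input combinations,", len(reached), "outcomes reached")
```

However the branches are arranged, the set of reachable outcomes can never be larger than the set of input combinations.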

I find myself dealing with smart people who are comfortable not knowing how their systems work. When two components interact in an unforeseen and undesirable way, I want to know which elements of the system are causing the clash. I make changes in the system to diagnose the problem so I can prevent a recurrence. I find members of the new community make changes in the system until the problem goes away and never mind if it will just recur a few days later with new input data.

We have a tool-user community that expects all tools to be "dumbed down" to the lowest level. We used to say that making a tool foolproof would create a tool only a fool would want to use. Spending a month writing a program so that a thousand users can save a day learning how to use it sounds very efficient, but it is often a false economy. The community of people using a program is more effective if its members understand something about the program. If a thousand users save a day learning how to use a program and then double the time it takes them to use the program because of that ignorance, then we spend more programmer time to spend more user time.

I don't know how many people were actually involved in programming computers in 1963 or in 2003, but a leap from 6000 to 6,000,000 seems about right, at least here in the United States. But what increase in productivity is represented by the thousand-fold increase in body count?

I would argue that in programming, like most things, there are a few people who have the knack. On any sports team, aren't there one or two people who seem to have a wealth of talent? How many pilots at the airport fly like Chuck Yeager or Bob Hoover? Of the tens of thousands of kids taking violin lessons, how many play like Jascha Heifetz?

I would also argue that in programming, unlike most things, most of what gets done gets done by people with the knack. One athlete does not make a team, one pilot is not an airline or a fighter squadron, one musician does not make an orchestra, but one good programmer can do enormously more than a less capable team. In spite of all the babble about not being able to get a baby in one month by impregnating nine women, most people want to believe that team efforts produce the best results. Here in the United States we gear our entire corporate lingo around teams and teamwork.

While cooperation is a good thing, software is a natural monopoly of talent. One good programmer can out-produce thousands of not-so-good programmers. The extra overhead of group interaction, defining interfaces and managing timelines, makes a single good programmer more productive than thousands of others.

In short, the 6000 people who were attracted to computers and computing as it was presented in 1963 represent much, perhaps most, of the talent in programming. Expanding the crowd a thousand-fold to 6,000,000 might increase the total talent tenfold. Expanding the crowd to the entire world of 6,000,000,000 might increase the total talent another tenfold. And that's it: there is no more programming talent to be found on this planet. Like oil or zinc or diamonds, once we find all the good programmers in the world there are no more.

Let us consider the job of writing a computer program to do a job for somebody else. In 1983 I wrote a program called AUTOGROW that calculated growth of cellular telephone systems to meet growing demand. Using expertise I had accumulated over months of working with the best cellular engineers, I took a week to write the algorithms for the first version of AUTOGROW. I wrote a programmer-style interface that asked the AUTOGROW user seven questions for its parameter inputs. Because I had a small community of knowledgeable and friendly users, I could stop there.

The next six months of AUTOGROW effort went into better algorithms, displaying cellular layouts so people could understand them, and doing studies for system and financial planning.

The next phase of user interface would be a menu-driven system that guides a user through the program parameter choices. A menu interface would have taken weeks to write, going over the choices, figuring out the options, and working with users to make the system "friendly."

The final phase of user interface would be a graphical user interface (GUI), the sort of point-and-shoot system that is popular today. That would have taken many months to design, program, and test. And when the GUI was all done and perfected, at best it would give the AUTOGROW graphical user the same capability as the seven dorky questions that AUTOGROW users answered for the fifteen years of its life.

I call it the UI pyramid. Designing a programmer-friendly interface takes one unit of effort, designing a text-based user-friendly interface takes ten units, and designing a post-modern graphical interface takes 100 units. The GUI that took 100 times as much effort to produce makes it easier for people who do not understand. But rather than squandering very scarce programming resources to cater to people who do not understand, wouldn't it make sense to educate those users so they have a sense of what the program is doing? Then we could produce ten times as many useful programs.

There is a time for graphics. Finding something on a map or seeing a statistical relationship is usually easier with a graphical display. To paraphrase the old cliche, a computer picture is worth 1024 words. But when the only purpose of a graphical interface is to make things pretty, then maybe we should skip it.

GUIs are a little bit faster for novices and a lot slower for experienced users. Why expend extra resources to slow down the people who use a program the most often?

There is another side to the resource-squandering equation of graphical interaction. A text-based interface can be designed to work from a script of input commands. The command script can be stored in a file. When a DOS or UNIX user calls and asks how to do something, the helper can send a command file. When a Windows user calls and asks the same question, we have all heard the conversation from the cube next door: "Go to the desktop and double-click on the icon. Do you see the ‘file open’ bar? Okay, click on that and look for ‘properties’ and right-click on that. See the waving bunny rabbit? When he turns purple, double click on the ears and wait for the menu to come up."

Come on, guys: we waste so much time on this kind of frustrating communication. Can't we put that into a script file?
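To make the script-file idea concrete, here is a minimal sketch in Python of a text interface that takes its commands one line at a time from any source of lines: a keyboard session, a file on disk, or a script a helper sends by e-mail. The command names (`set`, `show`) and the parameter names are hypothetical, invented for this illustration; they are not AUTOGROW's actual inputs.

```python
import io


def run_script(lines, params=None):
    """Execute 'set NAME VALUE' and 'show' commands, one per line."""
    params = dict(params or {})
    output = []
    for raw in lines:
        # Drop comments and surrounding whitespace; skip blank lines.
        line = raw.split("#", 1)[0].strip()
        if not line:
            continue
        words = line.split()
        command = words[0].lower()
        if command == "set" and len(words) == 3:
            params[words[1]] = float(words[2])
        elif command == "show" and len(words) == 1:
            for name in sorted(params):
                output.append(f"{name} = {params[name]}")
        else:
            output.append(f"unrecognized command: {line}")
    return params, output


# A command script as it might be stored in a file and sent to a user.
SCRIPT = """\
# growth study (hypothetical parameter names)
set growth_rate 0.15
set years 5
show
"""

params, output = run_script(io.StringIO(SCRIPT))
print("\n".join(output))
```

The same function accepts an open file or `sys.stdin` in place of the `io.StringIO` object, so the answer to "how do I do that?" can be a few lines of text rather than a narrated tour of the screen.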

We are reaching the software event horizon, if we are not there already. Every resource we can find, every smart person we know, is busily generating software to satisfy an insatiable demand.

There is a saying used to justify many of the truly awful things in the post-modern era. If you do things the way you used to do them, then you will get things the way you used to get them. We used to get ten million times (70 dB) more efficacy from our computers than we do now. It's time to do some things the way we used to do them to get the results we used to get.

Get smart young people interested in programming.

There is a pool of innate talent out there in the world's young people. In spite of how dumb they may seem to us, I believe today's young people have just as much genetic potential to be smart, effective, and useful human beings as we did when we were their age. (And don't forget that we did a few dumb things in our day.)

I remember the computer projects I did as a high school student: writing a program to play Nim and another to generate mathematical hexaflexagons, both with "graphical" displays on a teletype terminal. When our Latin teacher gave us an assignment to scan many pages of ancient Roman poetry, to mark each syllable as long or short, a friend and I enumerated the specific rules and wrote a program to do it so we could type in the poetry and the computer would type it back with long and short marks on top of the text. My final high school programming project was a program that would play the card game of Hearts. All of these were written in BASIC using a teletype terminal with round, gray, elephant-foot keys and a 110 baud modem.

Today's young computer users download and learn to use graphics programs, design intricate and sophisticated web pages, and play elaborate computer games. While all of these exercise their minds, they are fundamentally different from the programming challenges we chose. Specifically, these newer intellectual activities do not lead their participants into the programmatic and computer-organized thinking that produces decision-support software, or solid, robust, simple programs in general.

Design tools for smart people.

In the electronic, computerized office of the twenty-first century we have surrounded ourselves with a host of computerized office tools designed for users who do not work well with computers. It is an attempt to make an IBM personal computer work like a Macintosh.

The whole mouse concept went the wrong way, in my opinion. It was designed as a high-tech solution at Xerox, a three-button screen pointer matched to a five-button keyset for eight bits straight into the machine. The skilled mouse-cum-keyset user knew the American Standard Code for Information Interchange (ASCII) and could input text directly. The keyboard was used for large blocks of contiguous typing, such as the text I'm typing now. Editing was done with mouse and keyset.
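The arithmetic behind "eight bits straight into the machine" is that three mouse buttons plus five keyset keys give 3 + 5 = 8 binary signals, exactly one byte. The sketch below, in Python, shows one way such a chord could map onto an ASCII code. The bit layout (mouse buttons as the high three bits, keyset keys as the low five) is purely an assumption for illustration and is not claimed to be the encoding the actual hardware used.

```python
def chord_to_byte(mouse_buttons, keyset_keys):
    """Pack a 3-bit mouse chord and a 5-bit keyset chord into one byte.

    Assumed layout (hypothetical): mouse buttons form the high three bits,
    keyset keys form the low five bits.
    """
    if not 0 <= mouse_buttons < 8:
        raise ValueError("mouse chord must fit in three bits")
    if not 0 <= keyset_keys < 32:
        raise ValueError("keyset chord must fit in five bits")
    return (mouse_buttons << 5) | keyset_keys


# In ASCII the top three bits select the block of the code table:
# 010 is upper case, 011 is lower case, 001 is punctuation and digits.
upper_a = chord_to_byte(0b010, 0b00001)
lower_a = chord_to_byte(0b011, 0b00001)
space = chord_to_byte(0b001, 0b00000)

print(f"{upper_a:08b} {chr(upper_a)!r}")
print(f"{lower_a:08b} {chr(lower_a)!r}")
print(f"{space:08b} {chr(space)!r}")
```

Under this assumed layout, a user who knows the ASCII table can read the mouse hand as "which block" and the keyset hand as "which character within it."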

Then the mouse was dumbed down to a point-and-shoot interface (or grope-and-grunt as I call it) that makes it easy for dumb people (who don't know what is going on) to use the system. The trouble is it doesn't make it any easier for smart people than dumb people. As the dumb people learn the system and become smart people, even their efficiency is diminished by the claimed-easy-to-use, graphical interface.

At the text level, we have systems like Microsoft Word. It may be intuitive for somebody who has never used a computer to generate italics by leaving the keyboard, grabbing the mouse, and groping for the [I] box. It may be more intuitive, the first three times, than typing <i> in HTML or \it in TeX. By the fourth or fifth time, the extra time is annoying and we learn the Word shortcuts, including control-I for italics. But even if I learn the shortcuts, I have to go through all of them each time I type a formula like Eb/N0 because it is difficult or impossible in the point-and-shoot world to define abbreviations or to make global substitutions.
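In a plain-text markup workflow, an abbreviation is just a global substitution applied before the document is rendered. A minimal sketch in Python, with a made-up abbreviation token (`@EbN0`) and a made-up function name (`expand`), shows the idea for the formula above written out in HTML italics and subscripts.

```python
# Hypothetical abbreviation table: short token -> full markup.
ABBREVIATIONS = {
    "@EbN0": "<i>E</i><sub>b</sub>/<i>N</i><sub>0</sub>",
}


def expand(text, abbreviations):
    """Replace every abbreviation token with its full markup."""
    for token, markup in abbreviations.items():
        text = text.replace(token, markup)
    return text


source = "The link closes when @EbN0 exceeds the threshold; below that @EbN0, it fails."
print(expand(source, ABBREVIATIONS))
```

The formatting is typed once, in the table, and every later use costs five keystrokes; changing the notation everywhere means editing one line.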

This is more than a preference of one tool over another. Once we understand that it is the smartest 0.1 percent doing most of the useful work, we should realize that it is not only desirable but essential to use tools that use their time efficiently.

This goes far beyond Microsoft Word and beyond Excel, PowerPoint, Visio, and all the other tools designed for novices (and designed to keep them that way). This mindset also applies to software development systems, so-called integrated development environments (IDEs), that make developing software easier for people who do not know how to write programs. Pardon my pedantry, but people who don't know how to write programs should get a text editor, a command-line compiler, and a good book and learn how to write a computer program to do a job. There are tools like make and grep that make the job easier for both dumb and smart people.

Make systems last a long time.

The investment in computer programming, like any other high technology, is large. It was large in 1973 to pay for mainframe computers to be purchased and maintained and it is large in 2003 to pay for large programming and infrastructure-support staffs. The break-even payback period for any given piece of computer software depends on its application and user community, but I expect it takes years for a program to pay for itself.

The same goes for the effort of learning how to use a user interface. Consider the investment in writing a paper in Word or a spreadsheet in Excel. Now consider the likelihood that this paper or spreadsheet will still be useful in ten years. By having systems change file formats and user interfaces every six months, software vendors pad their own nests at the enormous expense of their user community. Continuing to patronize these vendors, to use these software packages for information more easily and effectively managed in simpler ways, is irresponsible.

My own record has been phenomenal in this regard. AUTOGROW, my first real computer program in the workplace, lasted fifteen years serving various purposes in cellular radio telephony. My airline planning tools written from 1990 through 1994 almost all enjoy frequent use in 2003. A graphical tool I wrote one afternoon in 1999 for analyzing an interlocking (the railroad term for multiple track intersections) is still in regular use. The fleet assigner I wrote in 1990 was made obsolete by mathematical software breakthroughs a few years later, but its data organization still stands as the foundation of the newer models.

I'm not sure I know all I did right in writing these programs, but I am sure that my attitude, my approach to programming and system design, and my choice of tools have a lot to do with it.

Put program structure into the program, not the tools.

The invention of the COmmon Business-Oriented Language (COBOL) for accounting software and the FORmula TRANslation (FORTRAN) language for mathematical computation during the 1950s set the stage for decades of computer programming. These languages say what needs to be said with a small vocabulary exquisitely used.

Since that time, the programming community has embarked on a quest for new and better languages. They gave us ALGOL, APL, BASIC, C, C++, Forth, InterCal, Java, Modula, Pascal, SNOBOL, SQL, and Visual Basic. Many of these languages add value to the computing and programming universe, BASIC for introducing young people (including myself) to computing, ALGOL for expressing algorithms, InterCal for creating the mindset of post-modern programming, but they are not tools I would use every day. To work with the post-modern software community, I use a carefully engineered subset of C that looks like FORTRAN.

For the work I have done and cherished, FORTRAN says what should be said just as Shakespeare's English has said what needs to be said for five centuries. Writing algorithms in C++, Java, or Visual Basic has the same appeal as writing sonnets in Esperanto.

Think what a wonderful world we would have if half the energy spent on new languages had been spent on writing better programs in the languages that we already had that work so well.

I'm no Shakespeare, but my FORTRAN/C source communicates on several levels. The intricacy and complexity of my programs are woven into the code that I write rather than hidden in the language itself.

This is more than a preference of one tool over another. Once we understand that the post-modern languages obfuscate rather than illuminate, we can return to a style of programming that builds tools to last for years and to adapt to new user needs for decades.

I would also argue for well written software in simpler languages on the basis of performance. In today's world of faster computers and larger programming budgets, the notion that C++ runs thirty times slower than FORTRAN and takes at least that much more development time seems like worrying about gas mileage with today's low oil prices. Instead of seeing faster computers as an excuse for dumbed-down tools and sloppy programming, I have seen faster computers as an opportunity to solve harder problems with more intricate algorithms.

Have computer users become computer literate.

Our customers in the programming world are the users of our software. As these customers clamor for simpler interfaces, we provide dumbed-down, graphical interfaces that make it easier for novices to use our software at the expense of smart or experienced users. This moves the programming effort to the wide base of the UI pyramid where most of the effort adds comfort rather than performance. My own customers soon learned that having ten times as much performance was comforting enough.

What constitutes computer literacy? In the old days, it was finding the computer center, feeding a deck of punched cards into a card reader, browsing a magazine while the computer did its thing, and reading output on broad line-printer paper, usually with green and white stripes across the page. It usually entailed using a keypunch to type input parameters that went into the card reader at the end of the deck after the source code of whatever was running.

In the more-recent old days, it entailed using a command-line interface, being able to create, copy, and delete files, knowing about directories, and using a text editor to enter data and to write command scripts. This was the case on machines and operating systems from International Business Machines (IBM), Digital Equipment Corporation (DEC), Hewlett Packard, and so on. Later on, UNIX and DOS followed the same paradigm.

So what has changed other than the expectation that today's computer users are too stupid to type commands and too lazy to use a text editor?

The atomic elements of files, command lines, and text editors form a basic vocabulary of using a computer. I have augmented that basic vocabulary with menus and graphics, but I never lost the fundamentals in the process. Other programmers have replaced the basic vocabulary with menus and graphical interfaces that keep their users in the dark. The result is that these users now have no idea how to get to the essential elements of their own work. This describes the Microsoft Office suite of programs as well as what I have seen in the Apple Macintosh.

This is more than a preference of one tool over another. Once we understand that files, commands, and editors are the fundamental tools, then we can build user interfaces with tremendous power. When we try to take away the basics, the results are predictably bad.

Insist on excellence.

This sounds like one of those post-modern business clichés, like "keep focused" or "work as a team," but it is a great deal more than the mindless use of a word like "quality." It is an attitude, a mindset, in designing and writing programs.

When I was a youngster in modern times, the computer did not make mistakes. When something went wrong, it was attributed to "human error." The movie "Dr. Strangelove or How I Learned to Stop Worrying and Love the Bomb" made macabre fun of that expectation of perfection. The one thing that never occurred to anybody in that film is that the software might not work as planned.

Today's computer community in post-modern times has quite a different expectation. If it doesn't work, then there must be a computer involved somewhere. The notion that standards are followed well enough so a user can read the manual, use a software tool, and get it right the first time is absurd today. When a user finally figures out all the tricks and gets it right, the new release of the tool breaks those workarounds so the user is back to figuring it out all over again. Comparing the modern TeX to the post-modern Word for document preparation seems silly, but there is still a community of Word users even after decades of abuse.

This is more than a preference of one tool over another. Once we understand how much effectiveness we lose using inferior tools, it becomes imperative that we migrate away from sloppy suites of fancy tools and learn to use simpler tools that work reliably and well.

Much of what is wrong with post-modern computers and computing is no accident. There is a large community of so-called Information Technology (IT) professionals who make their living from the inadequacies of the equipment they serve. In some sense they are no different from those who service our automobiles, people who profit from the fallibility of our machines. But there is a charlatan aspect to today's IT community and computer consumers are being sold a bill of goods. If computers worked as well as promised, then most of the IT community would be doing something else, actually working for a living. And if consumers knew how far programming has declined, then they would not buy computers and most of the IT community would be doing something else, actually working for a living. So much of the IT community makes its living on a perception arbitrage. They will work hard to perpetuate the lie.

There are good, decent, honest, effective computer professionals and there are shysters and charlatans out there. The proportion is somewhere between the legal profession and insurance salesmen.

The likelihood of a diatribe like this one cleaning up the IT profession is about the same as a book on government corruption cleaning up graft in Congress.

It is up to each of us in the computing community, providers and consumers, to uphold these values ourselves and to insist on them from others.
